Want better results? Start with social testing

When you’re talking about your brand’s social media strategy and someone asks why you approach some aspect of social in a certain way, what do you say?

- “Because we’ve always done it this way.” (This one is a career-killer; never say it.)

- “Because our audience seems to like it.”

- “Because we tested multiple approaches and found this one was by far the most successful in performance toward our goals.”

If you guessed that this article is going to get you to give the last answer every time, you’re right! Any time you have a hunch, question or challenge related to your strategy, social testing can help you generate powerful insights to support your next steps.

- If you want to make a case that you need more budget for video production, run a social media test on how different content types perform toward your KPIs like impressions or engagement.

- If you’re trying to prove that your audience isn’t responding well to a buttoned-up, corporate-sounding brand voice, test copy written in different voices or experiment with including emojis.

- If you’re wondering whether increasing your publishing cadence will increase traffic from social to your website but don’t have a ton of free time, test it for a week and compare against a normal control week.

Instead of wondering what could be and hoping for the best results, develop a hypothesis, test specific variables on social and look to the data for logical and results-oriented insights.

Getting started with social testing

Social media tests are structured and measurable. They help you understand through a data-driven lens what works (and what doesn’t work) for your brand, rather than relying on blanket best practices or other people’s benchmarks. While you’re always looking at social media reporting to see how your content, engagement and publishing strategies are doing, social media testing allows you to identify specific variables and walk away with a clear picture of what will (or what won’t) drive success for your brand.

Before you begin your testing, make sure you’ve got some of your basic information down. This includes:

- An understanding of the overall goals of your business

- Written documentation of your current social strategy, including your general goals for each platform

- An understanding of your audiences per channel

- An overview of current performance

- A list of questions, hunches and ideas that you want to test

Reviewing these foundational documents and your current performance will help you identify the most important areas to focus on when it comes to testing. You don’t need to go from zero to testing everything at once—and in fact, running multiple tests at once, especially if you’re focusing on organic social, can actually lead to inconclusive results.

Prioritizing the hypotheses that can make the biggest impact on your team’s top-level goals and your overall business goals will help you test in a way that makes the largest difference for your brand. With that in mind, let’s discuss some common testing vocabulary, review a couple of types of tests and walk through how you can develop your own.

Common testing terminology

As you set the foundation for social testing, it’s important to understand basic testing terminology. Here are a few common terms you’ll need to know.

- Hypothesis: A hypothesis is an assumption that you can use as the starting point for social media testing. It is generally based on limited information (you have some data or anecdotal evidence, but not enough to know your statement is correct) and is something that you can clearly prove or disprove through testing.

- Variable: A variable is an object or element that will vary, or change. In social testing, a variable might be something like the copy you use, the imagery you select or the time you post a message.

- Control or controlled variable: A control or controlled variable is something that stays the same throughout your test. The control is used as a point of comparison for checking the results of changing the variable you are testing.

- Metric: In social testing, a metric is a standard of measurement that you’re using to gauge results. Examples include impressions, engagements or clicks on a post.

- Statistical significance: A result is statistically significant when the difference you observed between variations would be unlikely to appear by chance alone if the variable you changed had no real effect. Reaching statistical significance requires a large enough sample size (for example, testing a variable across 100 messages rather than 10) and a clear control.
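To make the idea concrete, here is a minimal sketch of one common significance check, a two-proportion z-test on click-through rate (clicks divided by impressions). It assumes you have total clicks and impressions for each variation and treats every impression as an independent trial, which is only an approximation on social, where one person can see a post more than once. The function name and the numbers are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Compare the click-through rates of variation A and variation B.

    Returns (z, p_value) for a two-sided pooled two-proportion z-test.
    Assumes impressions are independent trials (an approximation on social).
    """
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    # Under the null hypothesis both variations share one true rate, so pool them.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (rate_a - rate_b) / std_err
    # Probability of a gap at least this large, in either direction, by chance alone.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical totals: A got 120 clicks from 4,000 impressions,
# B got 80 clicks from 4,000 impressions.
z, p = two_proportion_z_test(120, 4000, 80, 4000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below a threshold you choose before the test (0.05 is a common convention) suggests the difference is unlikely to be chance alone; it does not tell you the difference is large enough to matter for your goals.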

A/B testing

A/B testing is one of the most basic types of testing you can do on social media. In an A/B test, you change one variable and keep everything else the same. For example, if you want to learn which type of content results in the highest engagement on Instagram, you could test photo content vs. video content with the same caption or Story copy, posted at the same time on the same day of the week, one week apart.

When you change one variable in your posts, such as the content type, you compare the results of the two versions against each other. But two posts don’t make a test. Because so many variables are involved in a single social post (time of day, media type and so on), you’ll need to repeat the experiment several times with different content, holding your controlled variables constant each time, to get conclusive results.
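One simple way to combine several repeated pairs is a sign test: count how often variation A beat variation B across the pairs and ask how likely that split would be if each variation had an even chance of winning every pair. This is a minimal sketch with a hypothetical function name and made-up click counts. It drops ties and only measures how consistent the difference is, not how large it is.

```python
from math import comb


def sign_test(pairs):
    """Exact two-sided sign test over (metric_a, metric_b) pairs.

    Each pair is one repetition of the experiment, e.g. clicks on a photo
    post vs. clicks on the matching video post. Ties are dropped.
    """
    wins_a = sum(1 for a, b in pairs if a > b)
    wins_b = sum(1 for a, b in pairs if b > a)
    n = wins_a + wins_b
    if n == 0:
        return 1.0  # no informative pairs
    k = min(wins_a, wins_b)
    # Chance of a split at least this lopsided toward one side if wins are 50/50.
    one_tail = sum(comb(n, i) for i in range(k + 1)) / 2**n
    return min(1.0, 2 * one_tail)


# A single pair can never be conclusive...
print(sign_test([(42, 30)]))  # prints 1.0
# ...but ten hypothetical pairs where A wins nine times are harder to dismiss.
ten_pairs = [(42, 30), (38, 35), (51, 40), (29, 33), (47, 41),
             (36, 28), (44, 39), (40, 31), (55, 48), (33, 27)]
print(sign_test(ten_pairs))  # prints 0.021484375
```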

When you’re testing on social media, don’t change too many variables at once. Social media is already fast-paced, and if you test time of day and copy at the same time, you won’t know which change caused a difference in your results. In organic posting, A/B testing has to be set up manually; for example, you decide which posting times you want to compare against each other. In paid social, many ad platforms offer a built-in A/B testing function.

As mentioned, A/B testing works best for single variables. If you’re testing strategy against strategy or campaign against campaign, you’ll need to focus on the variables you can control so you can meaningfully compare them. With a large enough sample, A/B testing can get you to statistically significant results, but there is no perfect test on social. Each moment is unique. For this reason, analysis involves not only science but also the art of interpretation, and developing that skill is up to the social media manager.
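Before you run a test, a rough power calculation can tell you whether your audience is large enough to reach statistical significance at all. The sketch below uses the standard normal-approximation formula for comparing two proportions. The function name, the 5% significance level and the 80% power defaults are conventional assumptions rather than requirements, and the rates are hypothetical.

```python
import math
from statistics import NormalDist


def impressions_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate impressions each variation needs to detect a change in
    click-through rate from baseline_rate to expected_rate (two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = baseline_rate - expected_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect**2)


# Hypothetical: current CTR is 2%, and you hope a new format lifts it to 3%.
print(impressions_per_variant(0.02, 0.03))
# Detecting a smaller lift (2% to 2.5%) needs far more impressions.
print(impressions_per_variant(0.02, 0.025))
```

The result counts impressions, not posts, so divide by your typical reach per post to estimate how many posts each variation needs.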

Here are a few examples of A/B tests that you could run on social:

- Day of the week: Monday at 8:00 a.m. vs. Tuesday at 8:00 a.m.

- Content types: video vs. link

- Captions: long vs. short

- Copy: question vs. statement

- Images: illustration vs. photography

If you want to go beyond a single-variable test, look at multivariable testing.

Multivariable testing

Multivariable social testing is basically a larger-scale A/B test (there are more letters in the alphabet, after all). Instead of changing one variable, you alter several at a time. Because you’re testing more components, it can be harder to draw definitive conclusions from your data, and you’ll need a large enough audience to test successfully. Paid social can be particularly helpful for reaching large audience pools.

In the above ad, Entrepreneur is testing two variables: the featured image and the title. Everything else, including the caption, link and subtext, is the same. Behind the scenes, we would expect the target audience, budget and timing to be the same as well (with the audience segmented into comparable groups that each receive one version of the ad), so that the test focuses solely on the image and title copy and isn’t influenced by differences in demographic makeup. In a test like this, a likely goal is to understand which combination gets the most clicks.
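A test like this one is a small full factorial design: every image is paired with every title, so two images and two titles produce four ads. The sketch below enumerates the combinations and shows how quickly the number of cells grows, and how thinly a fixed audience gets split, as you add variables. The factor names, levels and audience size are hypothetical.

```python
from itertools import product


def build_variants(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


ad_factors = {
    "image": ["image_1", "image_2"],
    "title": ["title_a", "title_b"],
}
variants = build_variants(ad_factors)
for variant in variants:
    print(variant)

# Adding a third factor with three levels triples the number of cells,
# so each cell receives a smaller share of the same (hypothetical) audience.
ad_factors["cta"] = ["Learn More", "Sign Up", "Shop Now"]
audience_size = 60_000
cells = build_variants(ad_factors)
print(len(cells), "cells,", audience_size // len(cells), "people per cell")
# prints: 12 cells, 5000 people per cell
```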

Multivariable testing examples you might see include:

- Different content types with different accompanying captions

- Same content but different audiences to see which ones respond the best

- Different call-to-action buttons with different featured images

- Different tones of voice paired with or without emojis

- Shorter animated videos vs. longer live-action videos

Keep in mind that multivariable testing is not limited to ads. You can run these tests organically and still get meaningful results.

While you can run both A/B and multivariable tests manually and track your results in a spreadsheet, this approach is time-consuming and difficult to scale. It’s also hard to see results in real time or to respond quickly to your manager’s questions about performance.
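If you do track results manually, a few lines of code can take over the averaging. This sketch assumes you export one row per post with a tag column plus metric columns. The column names, tag names (written with plain hyphens) and figures are hypothetical, so match them to whatever your spreadsheet actually uses.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export: one row per published post.
SAMPLE_CSV = """tag,impressions,engagements,clicks
Test 1 - Metadata,1200,48,15
Test 1 - Metadata,950,30,11
Test 1 - Image,1100,61,22
Test 1 - Image,1300,70,25
"""


def average_by_tag(rows, metric):
    """Average one metric column for each tag."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for row in rows:
        totals[row["tag"]] += float(row[metric])
        counts[row["tag"]] += 1
    return {tag: totals[tag] / counts[tag] for tag in totals}


rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
for metric in ("impressions", "engagements", "clicks"):
    print(metric, average_by_tag(rows, metric))
```

To read a real export instead of the sample string, open the file with `open("results.csv", newline="")` (a hypothetical filename) and pass the file object to `csv.DictReader`.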

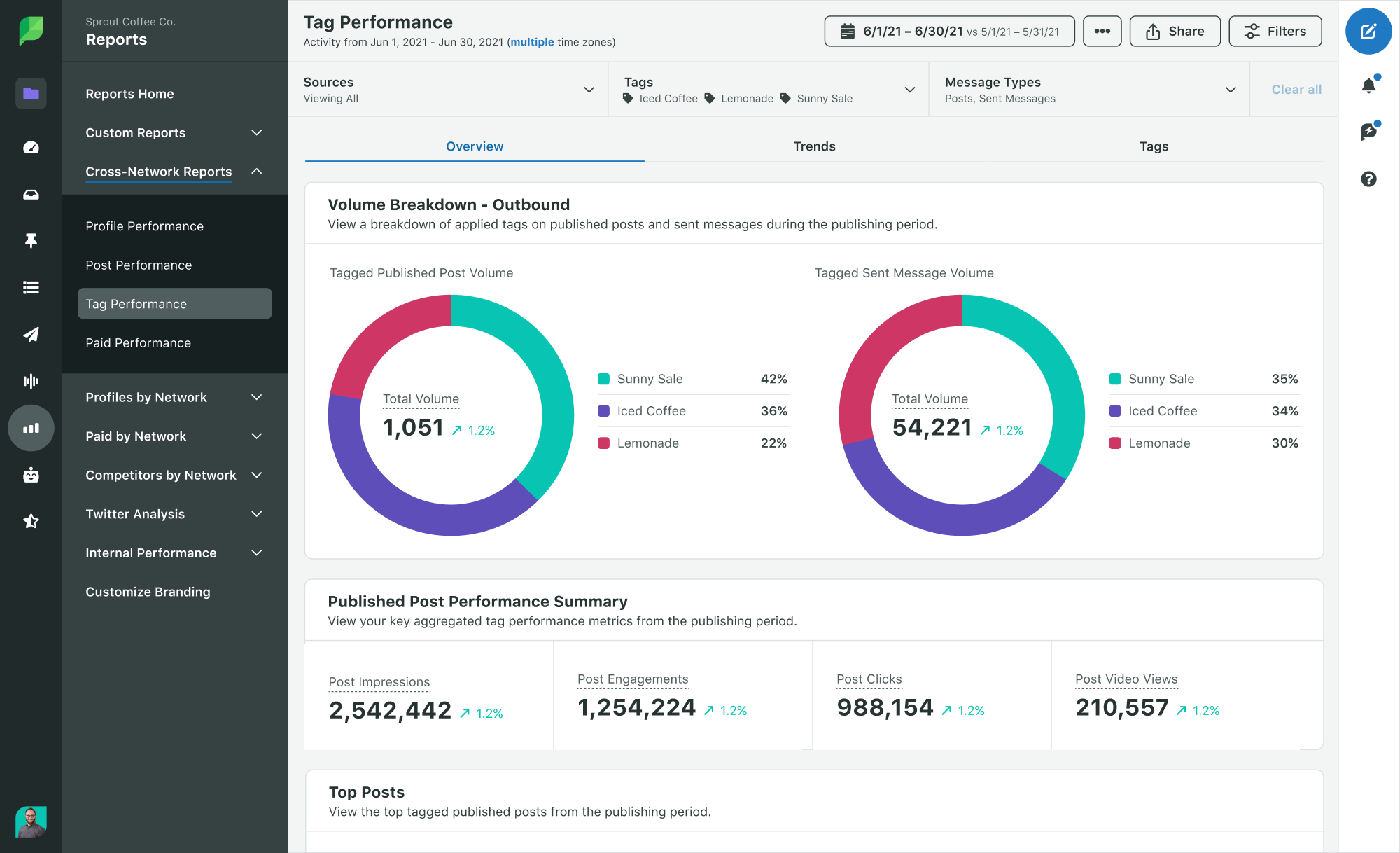

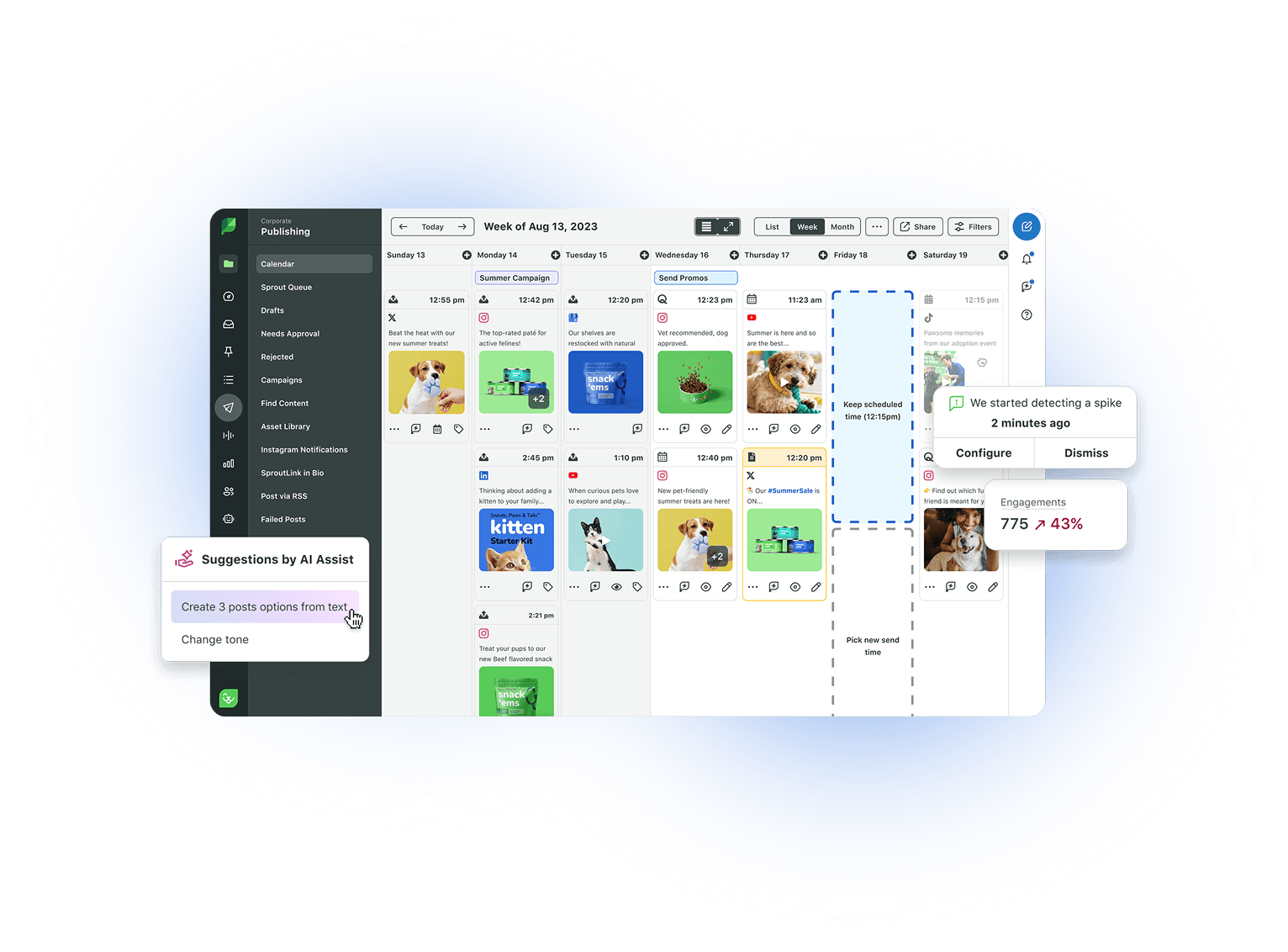

To run social media tests efficiently, we recommend using a tool like Sprout’s tagging and Tag Report to analyze your results. You’ll see immediately which tagged messages performed better overall in engagement and impressions. Because you’ll get results from your social testing efforts sooner, you can share them across your team and apply your findings to future campaigns faster.

How to run a social media test

Now that you know two ways to approach testing on social media, the next step is understanding how to execute a test. There are five steps, listed below. We’ll go into more depth on the first step later so you know more about what you can experiment with.

- Decide what you want to test and develop your hypothesis.

- Select the type of test: A/B or multivariable.

- Determine the duration of the test, the platform you want to test on and any variables that need to be controlled.

- Execute the test.

- Analyze and share the results and new ideas or next steps.

Sample 5-step social media test

Here’s an example of how you could walk through this process to help your team optimize your social media content strategy.

- Decide what you want to test and determine your hypothesis:

- I want to test which approach to sharing links to our blog content drives the most clicks on social.

- My hypothesis is that posting an image with the URL in the caption will drive more clicks than using the URL’s default metadata, thereby contributing to our overall business goals of increasing awareness through traffic.

- Select the type of test: A/B or multivariable.


- This will be an A/B test where I compare image-based posts vs. metadata posts. (See below for an example of a metadata post on the left and an image-based post on the right. These posts are not part of an A/B test so you’ll notice the copy is also different.)


- Determine the duration of the test, the platform you want to test on and any variables that need to be controlled.

- Our brand typically shares blog content most frequently on Twitter, so that will be the first platform I test on. I’ll run this test for a period of four weeks, using the same list of 10 blog posts, each of which will be posted four times (twice with metadata and twice with images). For a given blog post, written copy in the caption will be our controlled variable (the thing that doesn’t change) and only the media type will vary, whether the post includes an image or the blog’s metadata.

- Execute the test.

- So that I can see everything in one place, I’ll draft promotional copy for each of the 10 posts in one spreadsheet and assign consistent times and days of the week to when our brand shares each article. Then, I’ll upload all of these messages to our social media management platform (pro tip: try Sprout’s bulk uploading feature!) and make sure they are tagged. I’ll use “Test 1 – Metadata,” and “Test 1 – Image” as the two tags so that I can quickly analyze results.

- Analyze and share the results.

- Once the test has concluded, I’ll use Sprout’s Tag Report to assess average impressions, engagements and clicks for the metadata posts vs. the image posts (while our key metric is clicks and I’ll focus our reporting on that, we can still learn from other results in the process). I will summarize our key findings in an email to my manager, our content leadership and other members of the social team. This email will include whether or not my hypothesis was correct, and I’ll attach a PDF copy of the Tag Reports so anyone can verify the data and see sample messages from each group on their own. Additionally, I’ll propose what next steps we can take with this data in mind and what that new content would look like.
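The four-week plan from step 3 can be laid out so that week-to-week swings don’t favor either format: alternate each blog post’s media type week by week and start half of the posts on each format. This sketch assumes one share per blog post per week. The post names are hypothetical placeholders, the tag names use plain hyphens, and the fixed day, time and caption for each post are assumed to stay constant rather than being modeled.

```python
def build_schedule(blog_posts, weeks=4):
    """Plan one share per blog post per week, alternating media types.

    Even-indexed posts start with metadata and odd-indexed posts start with
    an image, so every week contains an even split of the two formats.
    """
    schedule = []
    for index, post in enumerate(blog_posts):
        first, second = ("metadata", "image") if index % 2 == 0 else ("image", "metadata")
        for week in range(1, weeks + 1):
            media = first if week % 2 == 1 else second
            schedule.append({
                "week": week,
                "post": post,
                "media": media,  # the only variable that changes
                "tag": f"Test 1 - {media.title()}",
            })
    return schedule


blog_posts = [f"blog-post-{n}" for n in range(1, 11)]  # hypothetical placeholders
plan = build_schedule(blog_posts)
print(len(plan), "scheduled shares")  # prints "40 scheduled shares"
for entry in plan[:4]:
    print(entry)
```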

Whether your test reveals a significant, obvious winner or shows little difference between your variables, it’s important to analyze your results, identify new opportunities and distill the insights into something you can keep for your own records. If you’re working with a team or need your manager’s buy-in for further testing, it’s even more important to hone your communication skills and make sure you’re sharing these insights so your entire team can benefit.
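If you want to check whether the gap in average clicks between two tagged groups is bigger than chance would produce, a permutation test is one option that makes few assumptions about how clicks are distributed. This sketch is an illustration with hypothetical per-post click counts, not a feature of Sprout’s Tag Report. It repeatedly shuffles the tag labels and counts how often a shuffled split produces a gap at least as large as the real one.

```python
import random


def mean(values):
    return sum(values) / len(values)


def permutation_test(group_a, group_b, iterations=10_000, seed=42):
    """Two-sided permutation test on the difference in means.

    Returns (observed_difference, p_value).
    """
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(iterations):
        rng.shuffle(pooled)  # reassign the tags at random
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            at_least_as_extreme += 1
    # The +1 terms count the observed split itself, so p is never exactly 0.
    return observed, (at_least_as_extreme + 1) / (iterations + 1)


# Hypothetical clicks per post, 20 shares per tag.
image_clicks = [22, 25, 19, 30, 27, 24, 21, 26, 23, 28, 20, 25, 29, 22, 24, 26, 21, 27, 23, 25]
metadata_clicks = [15, 18, 12, 20, 16, 14, 17, 13, 19, 15, 16, 14, 18, 12, 17, 15, 13, 16, 14, 18]
diff, p = permutation_test(image_clicks, metadata_clicks)
print(f"image posts averaged {diff:.1f} more clicks; p = {p:.4f}")
```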

Common tests to run on social media

So you know how to run an effective social media test, and you’re ready to get started. But where to begin?

Three of the most common variables to test on organic social are content type, copy and post timing.

Testing social content types

When testing content types, you’re comparing what works best with your audience on the channels you plan to test on, based on your goals.

The most important thing to establish up front is which key metric you’ll use to define success. Examples include:

- Impressions: which content type reaches the largest number of people

- Engagements (or specifically shares, comments, likes or clicks): which content type entices your audience to interact with your content in some way

- Traffic: which type of content drives the most new users to your website
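Settling on the key metric first matters because different metrics can crown different winners from the same test. In this sketch the per-content-type averages are invented for illustration, and the metric names loosely mirror the list above.

```python
# Hypothetical average results per content type from one test.
results = {
    "video": {"impressions": 5200, "engagements": 310, "new_users": 40},
    "image": {"impressions": 6100, "engagements": 240, "new_users": 55},
    "link": {"impressions": 3900, "engagements": 120, "new_users": 90},
}


def winner_by_metric(results, metric):
    """Return the content type with the highest value for the chosen metric."""
    return max(results, key=lambda content_type: results[content_type][metric])


for metric in ("impressions", "engagements", "new_users"):
    print(f"{metric}: {winner_by_metric(results, metric)}")
# prints: impressions: image / engagements: video / new_users: link
```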

You can track all of these metrics to generally understand what changes when you alter certain variables, but it’s important to have a clear picture in mind of what success looks like. In the example below, the Exploratorium is testing videos against an image, with two copy variations, for an event.

If you have an evergreen article or another post on your website that performs well, you can turn that one piece into a variety of social content types to compare which perform best. For example, you could create:

- A one-minute video of the author explaining the key takeaways

- An animated video of the key takeaways

- A quote graphic with a pull quote from the piece

- A plain text social post with quotes as text

- Image-based posts using screenshots that were included in the blog

- A Twitter thread

Try not to limit yourself when you’re experimenting with content types. Just make sure you’re clear on which variables you’re testing and that you’ve set up controls so you can draw conclusive results.

Testing social post copy

When you break down a piece of social content, there are many parts to pay attention to and many different opportunities to test. In a video, for example, you’ll have:

- Featured freeze-frame (thumbnail or title screen if you have one)

- Video title

- Subtitles (when available)

- Post caption

- Call-to-action button (if paid)

Perhaps you’ve read that you should write more headline-oriented copy for your videos. If you test two different headlines but keep the rest of the content the same, then you’ll be able to see which performs best.

As we’ve discussed, you also need to identify the variables you will control, or keep the same. If you’re testing social headlines specifically, you’ll want to control other things like the date and time you post and the video’s thumbnail image.

In the above video example, you could post one headline on a Monday and the other on the following Monday, at the same time and to the same audience.
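A quick way to keep yourself honest about controls is to write both variations down as structured data and confirm they differ only in the variable under test. The field names and values here are hypothetical.

```python
def differing_fields(variant_a, variant_b):
    """Return the set of fields whose values differ between two variations."""
    keys = variant_a.keys() | variant_b.keys()
    return {key for key in keys if variant_a.get(key) != variant_b.get(key)}


def is_clean_test(variant_a, variant_b, variable_under_test):
    """True only if the variations differ in exactly the field being tested."""
    return differing_fields(variant_a, variant_b) == {variable_under_test}


headline_a = {
    "headline": "5 ways to plan your week",
    "thumbnail": "thumb_01.png",
    "day": "Monday",
    "time": "10:00",
    "audience": "all followers",
}
headline_b = {**headline_a, "headline": "How our team plans a week in 10 minutes"}
print(is_clean_test(headline_a, headline_b, "headline"))  # True

# Posting the second headline at a different time adds an uncontrolled variable.
late_b = {**headline_b, "time": "15:00"}
print(sorted(differing_fields(headline_a, late_b)))  # ['headline', 'time']
print(is_clean_test(headline_a, late_b, "headline"))  # False
```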

From a photo essay showcasing the sartorial choices of N.B.A. draft day to a portfolio looking at ice stupas, the best New Yorker photography of the year. https://t.co/0a9pf9HBTS

— The New Yorker (@NewYorker) December 15, 2019

A selection of our favorite photo commissions published in the magazine this year. https://t.co/xy0Zn9fzfg

— The New Yorker (@NewYorker) December 15, 2019

Twitter makes it easy to test different messages. In these two Tweets, the New Yorker kept the link and image and changed the text. Perhaps one will drive more link clicks than the other, or one will earn more likes while the other earns more retweets.

The idea of testing your message is to understand what your audience wants and how that matches with the initial goal you set.

Testing post timing

Is your audience full of night owls or early birds? Do they engage more on the weekend or during weekday lunch hours?

While Sprout does have some proprietary data on best times to post (generally and by industry), the way to find out the absolute best time for your brand to post is to test.

To get clear, comparable results, be specific about what you’re testing. If 10:00 a.m. posts tend to do well for you, test the same content posted at 10:00 a.m. on Mondays vs. Thursdays vs. Saturdays. Or if you’re trying to determine whether your posts reach farther while your target audience is likely commuting, test posts at 8:00 a.m. and 5:00 p.m. against 2:00 p.m. on the same day of the week.
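Once results come in, comparing slots is mostly a matter of grouping. This sketch groups posts by day and time and computes an engagement rate per slot (total engagements divided by total impressions), so that a slot that simply reached more people doesn’t win on volume alone. The post data and field names are hypothetical, and a real test would need many more posts per slot.

```python
from collections import defaultdict


def engagement_rate_by_slot(posts):
    """Total engagements / total impressions for each (day, time) slot."""
    engagements = defaultdict(int)
    impressions = defaultdict(int)
    for post in posts:
        slot = (post["day"], post["time"])
        engagements[slot] += post["engagements"]
        impressions[slot] += post["impressions"]
    return {slot: engagements[slot] / impressions[slot] for slot in impressions}


# Hypothetical: the same content posted at 10:00 a.m. on different days.
posts = [
    {"day": "Monday", "time": "10:00", "impressions": 1000, "engagements": 50},
    {"day": "Monday", "time": "10:00", "impressions": 1000, "engagements": 30},
    {"day": "Thursday", "time": "10:00", "impressions": 900, "engagements": 54},
    {"day": "Saturday", "time": "10:00", "impressions": 600, "engagements": 21},
]
rates = engagement_rate_by_slot(posts)
for slot, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(slot, f"{rate:.1%}")
```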

If you want the benefit of optimal results without having to test, you can also try Sprout Social’s patented ViralPost technology, which enables you to queue up content and then publishes it at your audience’s most active times for engagement.

Conclusion

We’ve only covered three ways to test on social media, but there are many more to explore, and the possibilities keep growing as social platforms and consumer interests evolve.

Now that you know some basics of social testing, the world is your oyster. Choose one area of your social strategy that hasn’t been going well, or one hunch you’re hoping to prove, and design a test to get yourself the answers you need to optimize your approach.

With social media testing, you’ll be able to create a data-driven strategy to take your brand to the next level. And if you’re looking to dive deep into more social data, download our free reporting and analytics toolkit to learn more.
