AI ethics: How marketers should embrace innovation responsibly

Artificial intelligence (AI) isn’t just a sci-fi phenomenon turned reality—it’s a technological mainstay, developed over decades right underneath our noses. AI has actualized dreams of increased efficiency, with many brands already leveraging AI marketing over the past few years.

Although AI has sparked excitement and enthusiasm, there are concerns surrounding its ethics. Innovations often echo dystopian fiction: the tech industry’s vision for the metaverse, for example, had eerie similarities to media like Black Mirror and Snow Crash. And with works like Parable of the Sower, The Machine and I, Robot in the cultural zeitgeist, it’s understandable why sci-fi fans, researchers and technologists alike warn of the dangers of ignoring AI ethics.

In this article we’ll define what AI ethics are, why brands should be concerned and the top ethical issues facing marketers, including job security, misinformation and algorithmic bias. We’ll also share five steps to help you maintain ethical AI practices within teams and across the organization.

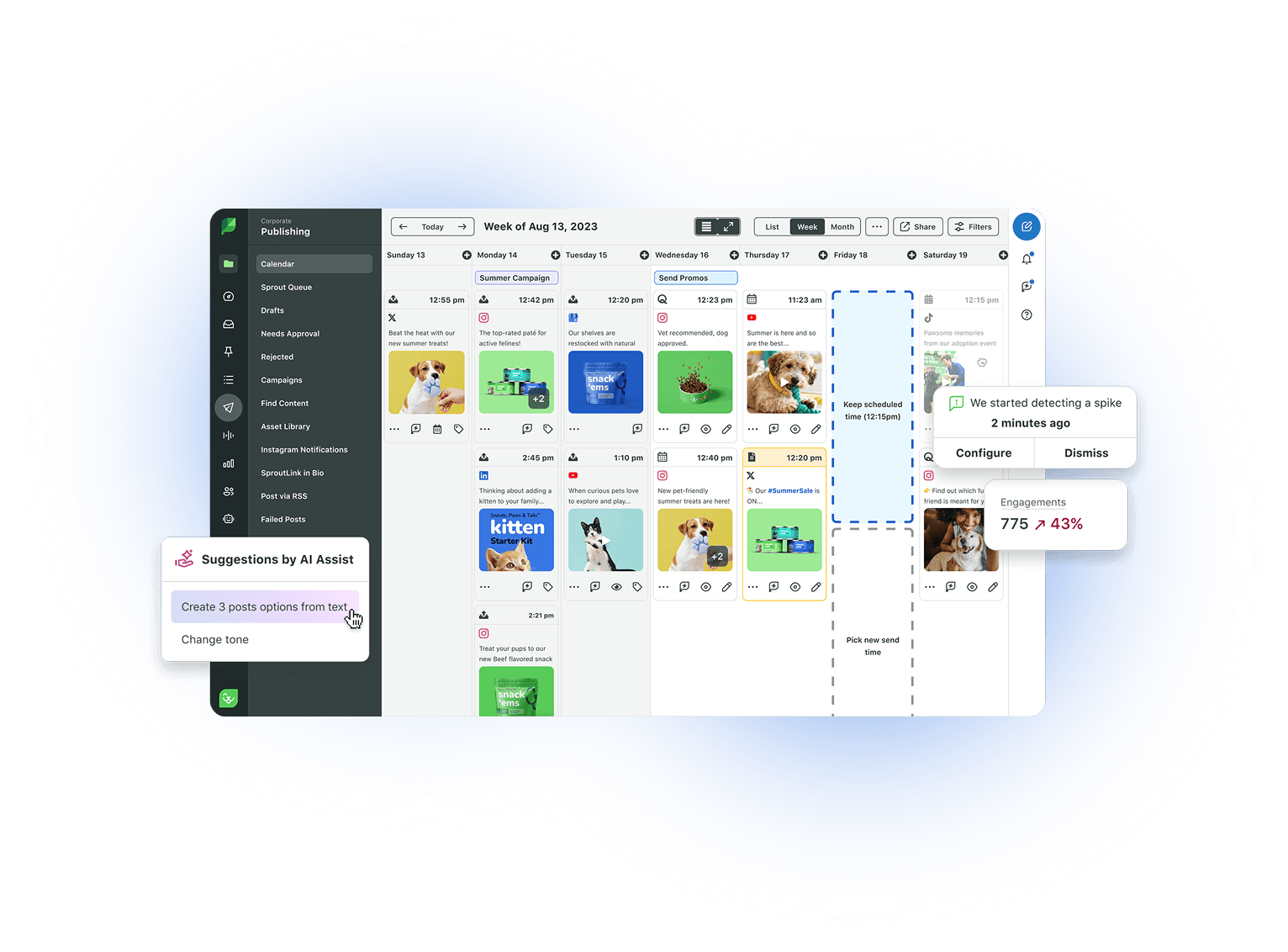


What are AI ethics?

AI ethics is a system of moral principles and professional practices used to responsibly inform the development and outcomes of artificial intelligence technology. It also refers to the study of how to optimize impact and reduce the risks and/or consequences of AI.

Leading tech companies, intergovernmental organizations like the United Nations and research and data science communities have worked to shape and publish guidelines to address ethical issues. For example, the United Nations Educational, Scientific and Cultural Organization (UNESCO) adopted the first global standard on AI ethics in November 2021: the Recommendation on the Ethics of Artificial Intelligence.

There are some AI regulations in place at the country and local levels, but as AI and other emerging technologies grow, businesses should expect more government regulation. As AI integrates further into our lives, AI ethics becomes a critical part of digital literacy.

Why AI ethics matter

Companies are already investing in AI, but the difficulty is ensuring responsible use.

According to The 2023 State of Social Media: AI and Data Take Center Stage report, business leaders expect increased investments in AI over the next few years. Our report also found 98% of business leaders agree that companies need to better understand the potential of AI and machine learning (ML) technology for long-term success.

While AI can improve performance, boost efficiency and generate positive business outcomes, brands are also experiencing unforeseen consequences of its application. These can stem from a lack of research or biased datasets, among other reasons. Misusing AI or neglecting ethical concerns can result in a damaged brand reputation, product failures, litigation and regulatory issues.

The first step to upholding ethical standards across teams within your organization is understanding the issues marketers face, so you can shape a plan to mitigate these business risks and safeguard your brand.

What AI ethics issues are top of mind for marketers

There are a variety of AI ethical concerns in the tech industry including, but not limited to, the following:

- False content generation

- Explainability

- Societal impact

- Technology misuse

- Bias

- Data responsibility and privacy

- Fairness

- Robustness

- Transparency

- Environmental sustainability

- Diversity and inclusion

- Moral agency and value alignment

- Trust and accountability

Some believe AI can help build more inclusive technologies, systems and services that can cater to diverse populations. The key is to establish ethical frameworks, regulations and mechanisms to ensure responsible use.

A member of the Arboretum, Sprout’s community forum, noted AI has the potential to promote inclusivity and reduce bias and discrimination by ensuring fair, unbiased decision-making processes. By addressing issues such as algorithmic bias in AI development, it could be possible to shape a future where AI is a positive force for change.

Along with the potential for positive change, there are also opportunities for misuse or unethical use of AI as it becomes more powerful. Our community discussed several risks including privacy infringement, manipulation of public opinion and autonomous weapons.

Comments like these just scratch the surface of ethical concerns across industries, but the top issues for marketers include job security, privacy, bias and discrimination, misinformation and disinformation, and intellectual property and copyright issues. We’ll explore each in detail in the following sections.


Impact on jobs and job displacement

Robots securing world domination is the least of our worries—at least for now.

That’s because researchers and experts are not threatened by the technological singularity, or the idea that AI will surpass human intelligence and replicate traits like social skills. They are aware of AI’s limitations and the potential ramifications of job replacement.

The goal of researching and investing in AI isn’t to replace humans. It’s to help us save time and effort so we can do more impactful things. Flock Freight’s Director of Social Media and Partnerships, Bob Wolfley, shared a great analogy for AI: “AI is like the dishwasher or washing machine in our homes. Think of all the time you save not washing dishes or clothes by hand.”

In our series Unread, members of Sprout’s marketing and creative team discussed how they currently use AI, from indulging in personalized shopping to using features like ViralPost® to help schedule social posts. Watch the video below to hear their hot takes on the benefits and fears of AI, including job replacement:

Privacy concerns

Concerns surrounding data privacy, protection and security are top of mind for brands. Security investments are an increasing priority for businesses as they seek to avoid any opportunities for surveillance, hacking and cyberattacks. As personalization becomes more popular, brands are implementing best practices for collecting, storing and analyzing data to protect customers and organizations.

Algorithmic bias and discrimination

Because AI learns from data, a poorly constructed or poorly trained model can demonstrate bias against groups that are underrepresented in that data. There have been several high-profile cases of bias involving AI-generated artwork, chatbots, facial recognition software, algorithms and AI tools for hiring practices.

For example, several TikTok and Twitter [rebranded to X as of July 2023] users called out a thread featuring “#SouthSudan Barbie” adorned with guns, a negative stereotype associated with a region grappling with socio-political issues such as genocide and refugee crises.

With bias entering even lower-stakes AI use cases like this, the question becomes: how do we work against bias and discrimination when the training datasets themselves can lend themselves to bias?
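One modest starting point is simply checking how well each group is represented in a dataset before it is used. The sketch below is illustrative only: the function names, the 10% cutoff and the sample region tags are all assumptions made for this example, not part of any particular tool, and a representation check is just one narrow signal rather than a full bias audit.

```python
from collections import Counter


def group_shares(labels):
    """Return each group's share of the dataset as a fraction between 0 and 1."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def underrepresented(labels, min_share=0.10):
    """List groups whose share falls below min_share (a hypothetical cutoff)."""
    shares = group_shares(labels)
    return sorted(group for group, share in shares.items() if share < min_share)


# Hypothetical example: region tags attached to 100 training images.
sample = ["north_america"] * 70 + ["europe"] * 25 + ["east_africa"] * 5

print(group_shares(sample))
print(underrepresented(sample))
```

A flagged group does not prove a model will be biased, and a balanced dataset does not prove it won’t be. A check like this is most useful as a prompt for the human review described later in this article.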

Misinformation and disinformation

Like humans, AI isn’t perfect. AI responses to prompts can be inaccurate, which can spread misinformation, and there are fears of people using AI to spread false information with malicious intent, known as disinformation. Along with these threats, there’s potential for brand crisis and reputational damage, especially without the appropriate safeguards and protocols in place.

Intellectual property and copyright issues

You’ve probably seen the Harry Potter cast as characters in a Wes Anderson film or the citizens of Bikini Bottom singing renditions of popular songs. These are examples of how people are using AI to replicate someone’s image and likeness or to repurpose intellectual property.

AI is an excellent sparring partner for creative tasks like brainstorming and creating outlines, but depending on how the outputs are used, it could lead to copyright infringement, plagiarism and intellectual property violations. For example, a group of artists filed a lawsuit against Midjourney and Stability AI in January 2023 claiming the tools infringed on the rights of millions of artists. Generative AI opens a legal can of worms and there’s still a lot of ground to cover, but creating proactive rules and frameworks will help mitigate ethical risks.

5 steps to maintain AI ethics within teams

Here are five steps to help guide your plan for mitigating AI ethical risks:

1. Set internal ground rules and responsibilities for AI use

Consider establishing an AI ethics team of ethicists, legal experts, technologists and leaders to help establish ground rules for your organization. For example, one sound ground rule is to use generative AI only for drafts and brainstorming, not for externally published content.

Along with these ground rules, define the role and responsibilities of each team member involved in AI, including the ethics team. Set your goals and values for AI as well. This will help set the foundation for your AI usage policy and best practices.

2. Define and audit the role of AI

AI can’t replace content creators, social media strategists or really any role in marketing. Identify the AI tasks that require human oversight or intervention and pinpoint the goals of your AI ethics policy to help craft processes for developing, managing and communicating about AI.

Once you identify the goals of your ethics policy, identify gaps and opportunities for AI at your organization. Consider the following questions:

- How does the organization currently use AI and how do we want to use it in the future?

- What software and analysis can help us mitigate business risks?

- What gaps do technology and analysis create? How do we fill them?

- What tests or experiments do we need to conduct?

- What existing solutions can we use with current best practices for our product teams?

- How will we use data and insights?

- How will we establish our brand positioning and messaging for AI technologies and ethics?

3. Develop an airtight vendor evaluation process

Partner with your IT and legal teams to properly vet any tools with AI capabilities and establish an ethical risk process. Their expertise will help you evaluate new considerations such as the dataset a tool is trained on and the controls vendors have in place to mitigate AI bias. A due diligence process for every tool before launching externally or internally will help you mitigate future risks.

4. Maintain transparency with disclosures

Collaborate with your legal and/or privacy teams to develop external messaging and/or disclaimers to indicate where and when your brand relies on AI. These messages can be used for content, customer care and other touchpoints. For example, TikTok updated its community guidelines to require creators to label AI-generated content. Communicating your ethical standards and frameworks for championing AI ethics will help gain the trust of peers, prospects and customers.
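For teams that publish through scripts or internal tools, a disclosure rule like this can be applied automatically. The following is a minimal sketch under stated assumptions: the disclosure wording, the `with_disclosure` function and the `ai_assisted` flag are hypothetical names invented for this example, and the actual language should come from your legal and/or privacy teams.

```python
# Hypothetical wording; the real text should be approved by legal/privacy teams.
AI_DISCLOSURE = "This content was created with the help of AI."


def with_disclosure(text, ai_assisted, disclosure=AI_DISCLOSURE):
    """Append a disclosure line to AI-assisted copy; leave other copy unchanged."""
    # Skip copy that is not AI-assisted or that already carries the disclosure.
    if not ai_assisted or disclosure in text:
        return text
    return f"{text.rstrip()}\n\n{disclosure}"


draft = "Meet our new summer collection."
print(with_disclosure(draft, ai_assisted=True))
```

Running the function twice on the same copy adds the disclosure only once, which helps when a post passes through several review steps.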

5. Continue education across leadership and teams

AI isn’t something business leadership can rush into. Like any wave of emerging innovation, it comes with a learning curve, on top of new technological milestones. Help level the playing field by hosting internal trainings and workshops to educate all team members, leaders and stakeholders on AI ethics and how to build and use AI responsibly.

Do the right thing with AI ethics

Considering ethics isn’t just the right thing to do—it’s a critical component of leveraging AI technology in business.

Learn more perspectives from leaders and marketers on how AI will impact the future of social in our webinar, along with other findings from The 2023 State of Social Media Report and tips for creating impactful social content.

![A user response to an AI-generated photo of "#SouthSudan Barbie" in a Twitter thread [rebranded to X as of July 2023]. The post reads, "We keep telling y'all that bias is built into this AI-generated garbage."](https://media.sproutsocial.com/uploads/2023/08/Screenshot-2023-08-17-at-1.55.06-PM.png)
